Robust Collaborative Inference with Vertically Split Data Over Dynamic Device Environments

Abstract

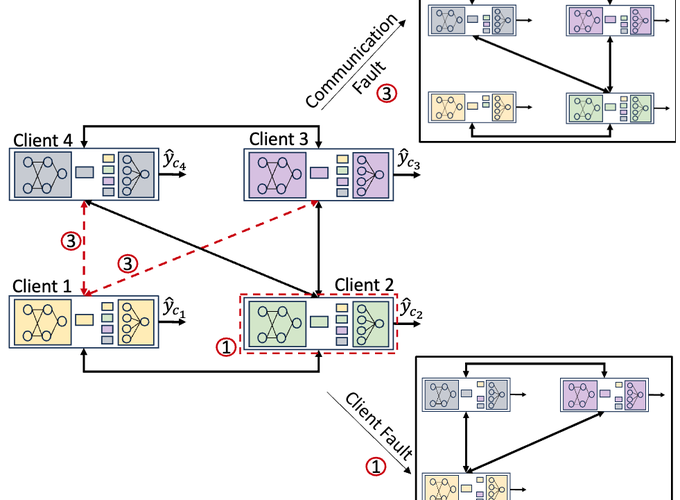

Many intelligence tasks operate over networks in which observations are vertically split across devices, so inference must be collaborative. Existing collaborative learning approaches, such as Vertical Federated Learning (VFL), typically assume, often implicitly, a reasonably fault-tolerant architecture, e.g., a star topology with an aggregator node that never fails. In practice, however, device networks may be decentralized and have dynamic connectivity, which makes them susceptible to catastrophic faults (e.g., from environmental disruptions or extreme weather). In this work, we study the problem of enabling robust collaborative inference over these decentralized, dynamic, and fault-prone networks. We first formulate the impact of faults on collaborative inference through a notion of dynamic risk for the data and network context. We then develop Multiple Aggregation with Gossip Rounds and Simulated Faults (MAGS), which combines three features to enhance fault tolerance during inference: (i) fault simulation via dropout in training, (ii) replication of aggregators across devices, and (iii) gossip layers to produce an ensemble inference. We provide theoretical insights into why each of these components enhances robustness, e.g., proving that the gossip protocol reduces dynamic risk according to prediction diversity. Extensive evaluations over five datasets and different network configurations validate that MAGS substantially improves robustness over VFL baselines. The code is available at: https://github.com/inouye-lab/MAGS_Distributed_Robust_Learning
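
To make the gossip component concrete, the following is a minimal illustrative sketch and not the released MAGS implementation. It assumes a ring topology, independent random device faults, and uniform averaging of class-probability vectors among surviving neighbors; the names `gossip_round`, `simulate`, and `spread`, and all parameter values, are hypothetical choices made for this example.

```python
import random


def gossip_round(preds, neighbors, alive):
    """One synchronous gossip round: each surviving device replaces its
    prediction with the uniform average over itself and its surviving
    neighbors (uniform weights are an assumption of this sketch)."""
    new_preds = {}
    for d in preds:
        group = [d] + [n for n in neighbors[d] if n in alive]
        dim = len(preds[d])
        new_preds[d] = [
            sum(preds[g][k] for g in group) / len(group) for k in range(dim)
        ]
    return new_preds


def simulate(num_devices=6, num_classes=3, fault_rate=0.3, rounds=3, seed=0):
    """Return the per-round predictions of surviving devices."""
    rng = random.Random(seed)
    # Assumed ring topology: each device talks to its two ring neighbors.
    neighbors = {
        d: [(d - 1) % num_devices, (d + 1) % num_devices]
        for d in range(num_devices)
    }
    # Simulated faults at inference time: each device fails independently.
    alive = {d for d in range(num_devices) if rng.random() > fault_rate}
    if not alive:
        alive = {0}  # keep at least one device so the example stays defined
    # Stand-in local predictions: random normalized class probabilities.
    preds = {}
    for d in alive:
        raw = [rng.random() for _ in range(num_classes)]
        total = sum(raw)
        preds[d] = [r / total for r in raw]
    history = [preds]
    for _ in range(rounds):
        preds = gossip_round(preds, neighbors, alive)
        history.append(preds)
    return history


def spread(preds):
    """Largest per-class gap between any two devices' predictions."""
    dim = len(next(iter(preds.values())))
    return max(
        max(p[k] for p in preds.values()) - min(p[k] for p in preds.values())
        for k in range(dim)
    )


if __name__ == "__main__":
    for t, preds in enumerate(simulate()):
        print(f"round {t}: spread = {spread(preds):.4f}")
```

Because each round replaces a prediction with a convex combination of existing predictions, the spread across surviving devices cannot grow from one round to the next. The sketch shows only this averaging effect; it does not model the dropout-based training or the replicated aggregators, and it says nothing about the dynamic risk analysis itself.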